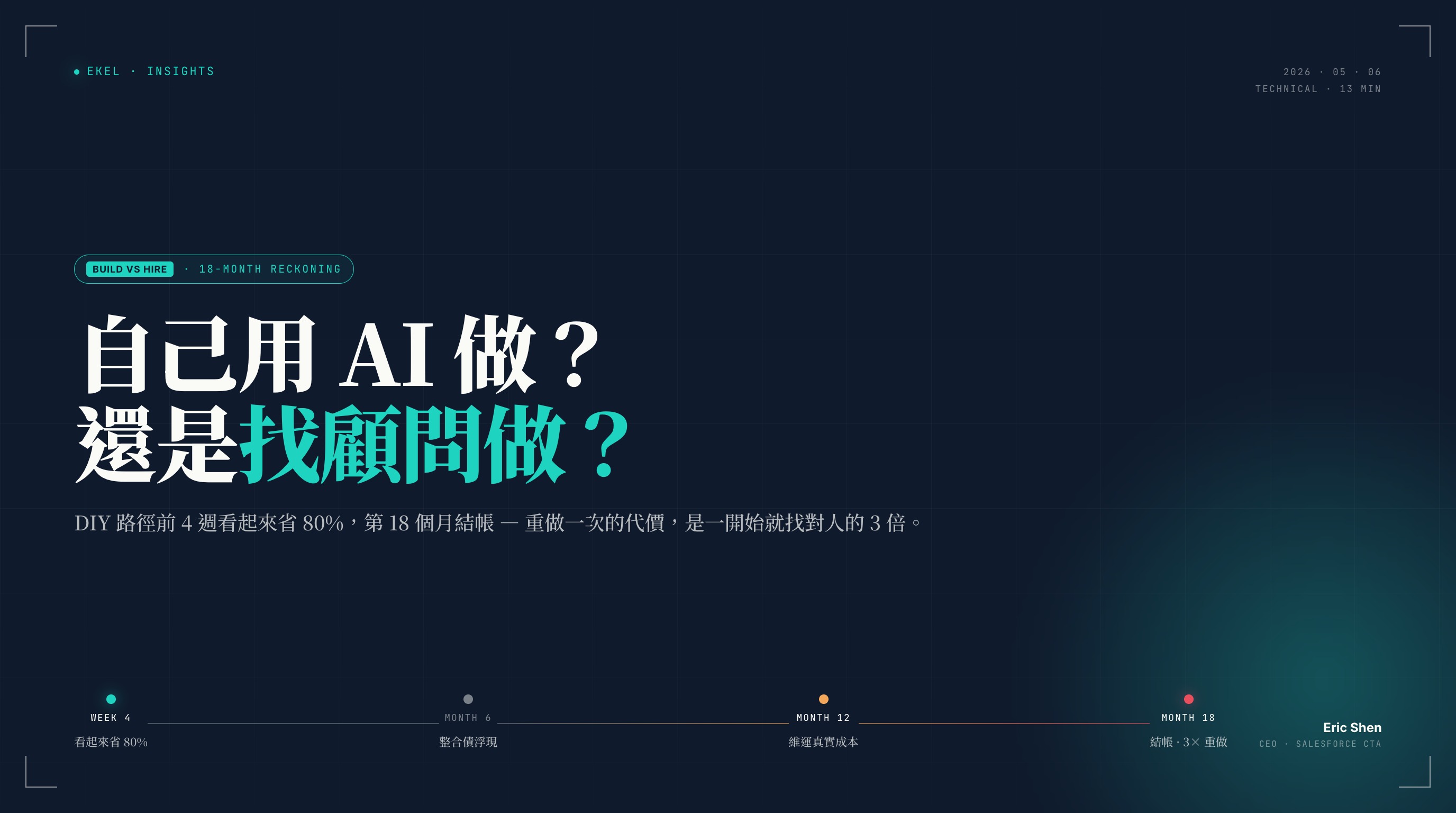

DIY With AI, Or Hire Consultants? The 18-Month Cost Sheet for Custom Enterprise Apps

The DIY path looks 80% cheaper at week 4. The bill comes due at month 18 — and rebuilding then costs three times what hiring the right people up front would have.

Summary

- Core thesis: DIY building a custom enterprise app with AI looks like it saves 80% of the cost in the first 4 weeks. The real bill arrives at month 18 — and rebuilding then costs 3× what hiring the right people up front would have. AI didn't make development cheaper. AI deferred the cost, with interest.

- Same tool, different driver: Experts use AI as a continuous correction loop — "no, that query falls over at 100k rows"; "no, that useEffect creates an infinite loop." Non-experts use AI as guided navigation: whatever AI suggests, they ship. The deadliest failure mode is AI being wrong while looking right; only experts catch it.

- What AI still can't do isn't technical, it's accountability: the production SLA, compliance audits, handoff after the original engineer leaves, the customer call when an escalation hits. AI does none of these. When you hire a consultancy, those four things are what you're actually buying. The code itself, AI can write.

- It is not "outsource everything" — it is "outsource what should be outsourced": your engineers should write the IP only you can write — the differentiating core. Generic back-offices, customer portals, HR tools — these have proven best practices. The expensive thing in the AI era isn't engineers; it's misallocated engineer time.

1. The Familiar Weekly Meeting

You have probably seen this scene.

Weekly meeting. The IT lead opens a slide: "This isn't complex — internal back office plus a customer query feature. We've got Cursor and Claude Code, two senior engineers can handle it internally, save the NT$6M outsourcing quote." The CEO nods. The CFO crosses a line item out. Decision made.

It looks rational. It looks frugal. The internal team "knows the business best."

The problem is: you can't tell whether this decision was right or wrong until eighteen months later. And by then, rebuilding it costs three times what hiring the right people up front would have.

This essay walks the timeline — week 4, month 6, month 12, month 18 — and shows what the DIY path runs into at each stage. It also explains why this path becomes more dangerous in the AI era, not safer.

2. Why The Decision Looks So Reasonable

Let me concede the obvious: the appeal of DIY-with-AI is real, not imagined.

- AI tooling is genuinely cheap. USD 50 to 200 per engineer per month subscription cost. Compared to a NT$6M outsourcing quote, the numbers aren't even in the same league.

- The internal team has context. They know the business, they know what the boss prefers, they know which department uses which legacy system. That context is real, and external consultants do need time to build it.

- No procurement friction. RFP, comparison bids, contracts, POs — these things genuinely slow decisions down. Build it internally, and code starts shipping next week.

All three are true. All three are also only half the story.

They are true if your viewpoint is day 1 to day 30. They are wrong if your viewpoint is day 31 to day 540. The rest of this essay walks those 540 days.

3. Week 4 — Looks Like 80% Saved

Four weeks in, the team ships a demo. Login page, dashboard, main flow — everything runs. The UI is unexpectedly handsome — AI has opinions about visuals; what it lacks is opinions about your business.

The CEO is impressed. The IT lead takes credit at the next weekly meeting. The CFO starts thinking next year's IT budget should lean more into in-house builds.

Pin one idea here: that's not a system, that's a demo.

A demo runs the happy path. A system has to last eighteen months — employees still using it, audits still clearing, compliance not exploding. Demos are for executives. Systems are for the 200 people who use them every day. Demos run on clean test data. Systems face five years of accumulated dirty history, three new subsidiaries from this year's M&A, and three different fiscal-year carryovers.

The distance between those two things looks like "a few more sprints." It is actually twelve months of engineering discipline — discipline AI doesn't write into your codebase. Discipline only experience designs in.

4. Month 6 — Integration Debt Surfaces

End of Q2. The first wall the DIY path hits is called integration debt.

AI is great at writing self-contained features. The problem is enterprise systems almost never have self-contained features — every system has to talk to the rest of the stack: SSO, ERP, the data warehouse, the finance system, the existing permissions model, the existing customer master.

And AI doesn't know:

- How your SSO is configured — OIDC or SAML, which scopes are exposed

- Your row-level security rules — which department sees which records

- How your ERP primary keys map to the new system, and whether you need write-back

- The edge cases of your business logic: "VIP customers are an exception", "year-end carryover", "how to migrate historical data", "how to handle records owned by departed employees"

The dangerous part isn't that AI can't write it. The dangerous part is that AI guesses a plausible version and writes that. Types pass. Build passes. Tests pass. Production hits the first timezone-aware row of real data and explodes.
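One way the "tests pass, production explodes" pattern can play out, sketched in Python. The function names, dates, and scenario here are hypothetical illustrations, not taken from any real engagement. The assumption is that the clean test data uses timestamps without a timezone, while a real upstream system sends timestamps with one.

```python
from datetime import datetime, timezone

def is_overdue(due_at: datetime, now: datetime) -> bool:
    """Hypothetical check, written the way a plausible first draft might be."""
    return now > due_at

# Clean test data: both timestamps are naive, so the check works.
now = datetime(2025, 1, 10, 9, 0)
assert is_overdue(datetime(2025, 1, 9, 9, 0), now) is True

# A real row arrives carrying an explicit UTC offset.
real_due_at = datetime(2025, 1, 9, 9, 0, tzinfo=timezone.utc)
try:
    is_overdue(real_due_at, now)
    outcome = "ok"
except TypeError as exc:
    # Python refuses to order naive and aware datetimes against each other.
    outcome = f"TypeError: {exc}"

print(outcome)

def is_overdue_strict(due_at: datetime, now: datetime) -> bool:
    """Stricter variant: reject naive timestamps at the boundary."""
    if due_at.tzinfo is None or now.tzinfo is None:
        raise ValueError("timestamps must be timezone-aware")
    return now > due_at
```

The stricter variant does not make the integration question go away. It only forces the question of which timezone the source system uses to be answered up front, rather than discovered in production.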

The internal team starts spending its time not on new features, but on debugging what AI wrote. Fixing the generated code now costs more time than generating it saved. The AI accelerator starts working in reverse.

5. Month 12 — The True Cost Of Ownership Lands

End of year one. The question now isn't "can you write it?" — it's "can you keep it running?"

The original engineers left. The two senior engineers — one jumped to a startup, one rotated to AI work — left behind a codebase with no ADRs, no tests, comments that read like AI talking to itself. The next engineer who picks it up spends two weeks reading and concludes: "Either we rewrite this, or it'll cost me three months to fully understand it."

No on-call rotation. Production throws errors at 2 a.m. and no alert fires. By 9 a.m. customer support has taken 30 calls, and only then does someone notice that no data has been written since 11 p.m. the night before.
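A silent overnight write failure like this is the kind of gap a basic freshness check is meant to catch. A minimal sketch, assuming the application records a hypothetical `last_write_at` timestamp on every successful write. The function name and the 15-minute threshold are illustrative only, and a real deployment would route the result to whatever paging system the team already runs.

```python
from datetime import datetime, timedelta, timezone

MAX_WRITE_GAP = timedelta(minutes=15)  # illustrative threshold, not a recommendation

def writes_are_stale(last_write_at: datetime, now: datetime,
                     max_gap: timedelta = MAX_WRITE_GAP) -> bool:
    """Return True when no successful write was recorded within max_gap."""
    return now - last_write_at > max_gap

last_write = datetime(2025, 3, 3, 23, 0, tzinfo=timezone.utc)  # 11 p.m.
checked_at = datetime(2025, 3, 4, 2, 0, tzinfo=timezone.utc)   # 2 a.m.

print(writes_are_stale(last_write, checked_at))  # True: three hours without a write
```

The check itself is trivial. What takes discipline is deciding on day 1 that someone owns the alert it raises.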

Compliance and audit problems surface. The accounting firm runs annual checks and finds: PII fields aren't encrypted, log retention policy is missing, audit trail has gaps. The CFO is asked to explain — and the people who could explain have already left.

Pin a second idea: AI made writing code cheap; it made understanding code expensive. AI generated three times the code volume of the past, but the number of people who can read it, debug it, and extend it didn't grow. Codebase maintainability is something AI can't write in: it comes from conventions, ADRs, review culture, observability. It's something you put in on day 1, not patch in at month 12.

6. Month 18 — The Reckoning

By month 18, this system either gets rewritten, or you allocate budget to "formalize" it — backfill tests, backfill docs, backfill observability, backfill compliance, backfill a team that can maintain it.

The CFO puts the three paths on the table:

| Phase | Traditional outsourcing | DIY + AI | Right consultant |
|---|---|---|---|
| Months 1–3 build | NT$6M | NT$0.8M | NT$3.6M |
| Months 4–12 maintenance | NT$1M | NT$2.8M | NT$0.8M |
| Months 13–18 rebuild | NT$0 | NT$5M | NT$0 |
| 18-month total | NT$7M | NT$8.6M | NT$4.4M |

(Numbers are anonymized composites from observed engagements, not a single real client. Actual ranges vary by industry and complexity.)
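The arithmetic behind the table can be re-run with your own figures. A small sketch in Python, using only the composite numbers from the table above (in NT$ millions). The variable names are illustrative, and the two ratios at the end describe these composite figures only, not any particular project.

```python
# Figures in NT$ millions, copied from the table above.
costs = {
    "Traditional outsourcing": {"build": 6.0, "maintenance": 1.0, "rebuild": 0.0},
    "DIY + AI":                {"build": 0.8, "maintenance": 2.8, "rebuild": 5.0},
    "Right consultant":        {"build": 3.6, "maintenance": 0.8, "rebuild": 0.0},
}

# Sum the three phases for each path.
totals = {path: round(sum(phases.values()), 1) for path, phases in costs.items()}
for path, total in totals.items():
    print(f"{path}: NT${total}M")

# Week-4 view: how far the DIY build line sits below the outsourcing quote.
early_saving = 1 - costs["DIY + AI"]["build"] / costs["Traditional outsourcing"]["build"]

# 18-month view: DIY total relative to the right-consultant total.
late_ratio = totals["DIY + AI"] / totals["Right consultant"]

print(f"DIY build saving vs outsourcing quote: {early_saving:.0%}")  # about 87%
print(f"DIY 18-month total vs right consultant: {late_ratio:.2f}x")  # about 1.95x
```

Swapping in your own build, maintenance, and rebuild estimates is the quickest way to see which column your project actually lands in.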

Pin a third idea: DIY isn't saving money. It's deferring the bill, with interest.

This table doesn't even count two more expensive things: (1) the features the business didn't ship in those 18 months, the differentiation that didn't get built; (2) the morale erosion on the internal team, the loss of confidence from business units, the executives who no longer expect IT to deliver.

7. So What's The Watershed? — Expert vs Non-Expert AI Use

Many readers reach this point and ask: "Wait — our internal engineers are senior too. Why would the DIY result be that bad?"

The answer is: the difference isn't in the tool. It's in the driver.

Experts use AI as a correction loop:

- "AI, this query becomes unusable at 100k rows — rewrite it"

- "AI, this useEffect dependency array creates an infinite loop — fix it"

- "AI, this try-catch silent-fails — re-throw the error"

- "AI, I can't find this API in the docs — did you hallucinate it?"

Every interaction corrects the direction. AI is the tool being driven.
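The third correction in that list, the silently failing try-catch, can be sketched in Python terms. Everything here is hypothetical: `save_record` is a stand-in for a real database write, and the two sync functions only contrast the pattern an expert would reject with the one they would ask for.

```python
import logging

logger = logging.getLogger("sync")

def save_record(record: dict) -> None:
    """Hypothetical stand-in for a database write that rejects bad rows."""
    if "id" not in record:
        raise ValueError("record has no id")

def sync_silent(records: list[dict]) -> int:
    """Swallows every failure: the caller sees success even when rows are lost."""
    saved = 0
    for record in records:
        try:
            save_record(record)
            saved += 1
        except Exception:
            pass  # the failure disappears here
    return saved

def sync_loud(records: list[dict]) -> int:
    """Logs the failing row and re-raises so callers and monitoring can see it."""
    saved = 0
    for record in records:
        try:
            save_record(record)
            saved += 1
        except Exception:
            logger.exception("failed to save record %r", record)
            raise
    return saved

rows = [{"id": 1}, {"name": "no id"}, {"id": 3}]
print(sync_silent(rows))  # 2: one row dropped with no trace
```

Both versions run cleanly on well-formed test data, which is why the difference is easy to miss in a demo and in a hurried review.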

Non-experts use AI as guided navigation:

Whatever AI suggests, they ship. AI says "I'm not sure but typically people write this" and they ship that too. AI writes a call to an external API; it looks reasonable; the reviewer is another person who is also leaning on AI, so everyone nods and merges.

The deadliest failure mode isn't AI writing wrong code. It's AI writing wrong code that looks right — types check, build passes, demo runs, PR review feels reasonable. Only an actual expert catches "no, this pattern falls over with real data volume in production."

Same AI tooling, different driver, 5–10× output gap. That gap is invisible on day 1. It's painfully visible by month 12.

"Our internal team is senior too" — the question is: senior in this domain, this stack, this integration problem? Or senior "by our company's standard"? Those two things diverged, not converged, in the AI era.

8. Four Things AI Still Can't Do — Accountability, Not Technique

Most "what AI is bad at" lists you'll read are about technical judgment: edge cases, architectural trade-offs, asking good questions. All true. But for the buyer, four other things are more immediate:

- AI won't carry your production SLA. Contracts are signed entity to entity. AI isn't on the contract. When the system goes down at 2 a.m., AI isn't who climbs out of bed to fix it.

- AI won't testify in your compliance audit. When the accounting firm, internal audit, or regulator asks "who decided this data retention policy and why does it look like this?" — someone has to be able to explain. And still able to explain three years later. AI doesn't take the stand.

- AI won't onboard the next engineer when the original one leaves. Without docs and ADRs, AI re-reading the codebase only generates a new explanation, not the actual historical context.

- AI won't pick up the phone when a customer escalates. B2B systems hit complaints. Someone has to call, meet, explain, commit, remediate. AI doesn't make phone calls.

None of these four things sits on a "technical capability" axis. They all sit on an "accountability" axis. When you hire a consultancy, those four things are what you're actually buying. The code itself, AI can write.

9. So Should Everything Be Outsourced? — The Opportunity Cost Argument

No. This essay isn't arguing that enterprises shouldn't have internal engineers. The opposite.

The question isn't "should we build it ourselves?" — it's "which things should we build ourselves?"

Your internal engineers should write what only you can write — the differentiating IP, the algorithms tightly coupled to your proprietary data, the parts that decide your competitive edge. No consultant can help you here, because the know-how is your moat.

Generic things — employee back-offices, customer portals, HR tools, finance tools, integration layers, data sync — have proven best practices, abundant public design patterns, ready-made compliance frameworks. Outsource these. Hand AI to specialists who've done these fifty times.

The expensive thing in the AI era isn't engineer salaries. It's misallocated engineer time. Putting your strongest engineers on an HR approval workflow is taking your scarcest resource and aiming it at something that has 100 ready-made templates in the market. That same time could have built something nobody has built yet — something only you can build.

The question to ask isn't "can we build this internally?" — it's "is this worth building internally?"

10. So What Are You Actually Buying When You Hire Us?

By now the transaction is clear.

Hire the right consultants and you're not buying code. AI can write code, and writes it fast. You're buying four things:

- Judgment — what to build yourself, what to use a framework for, what to give to AI, what absolutely not to give to AI. The cost of this judgment is invisible on day 1. By month 18, the gap is 5× in price.

- Risk transfer — SLA, contractual liability, escalation paths, insurance. When something goes wrong, someone is on the hook; when compliance asks, someone testifies; when audit asks, someone explains. This is something internal DIY can't transfer out.

- Operational discipline — ADRs, automated tests, observability, code review culture, CI/CD pipelines. These belong on day 1, not patched in at month 18. The cost ratio is 1 : 10.

- AI governance — prompt template design, hallucination control, AI-generated commit traceability, root-cause attribution for production incidents. This is a brand-new engineering problem that only emerged after 2024, and an internal team that hasn't done it 50 times can't catch up in a year.

These four things together are what "the right consultancy" actually differentiates on. If you want to see how we run the four in practice, read our companion essay on the VIBE Coding workflow — that piece walks through what we do at each step, after you've decided to outsource.

Our VIBE Coding capability page lists typical engagement types and delivery patterns, which is useful for checking whether your project fits.

11. Conclusion — Settle The 18-Month Bill Before You Decide

Back to the weekly meeting at the start.

If you're making this decision right now — internal system, build it ourselves or hire consultants — what this essay wants to leave you with isn't an answer. It's a better way to ask the question:

Settle the 18-month bill before you decide.

Not the 4-week demo bill. Not the 3-month build bill. The bill 18 months out, with the system still running, employees still using it, audits still clearing. Once that bill is on paper, "is DIY actually saving money?" and "are consultants actually expensive?" look very different.

AI didn't make consultants cheaper. AI made picking the wrong consultant more expensive. Because in the wrong hands AI amplifies mistakes 5–10×; in the right hands it amplifies judgment 5–10×.

If you'd like us to settle that 18-month bill for you for free — to see which path your project actually fits — get in touch. We won't push you to outsource. If the math says DIY makes more sense, we'll say so.

Related Reading

Agentforce in 2026: An Outsider's 18-Month Field Notes

We haven't shipped Agentforce for a client yet — but we've spent 18 months tracking it. This post compiles failure modes from Western early adopters, Salesforce's platform evolution from Agent Builder to Testing Center to Agentforce Script, and a decision framework with code samples for enterprises preparing to launch in 2026.

VIBE Coding: A New Paradigm for AI-Driven Enterprise Application Development

We turned the knife on ourselves — replacing the external SaaS we had been using with our own EKel Finance Cloud, rebuilt via VIBE Coding. A traditional estimate would have been 4–6 months; we shipped Web, iOS, and Android in four weeks. This piece breaks down how humans and AI divide labor at every engineering stage, with the pitfalls we hit and a workflow you can take home.

Financial Services Cloud in Taiwan's Financial Industry: A Practitioner's Playbook

Our financial-services delivery experience comes from Australia — our CTO led FSC implementations at two Australian Tier 1 banks and one mid-sized bank. This article maps that experience onto Taiwan's regulations, core systems, and budget structures, giving decision-makers about to kick off a project a frank, vendor-spin-free basis for judgement.

Want to discuss your specific scenario?

A 30-minute conversation with a CTA. Based on your situation, we will answer directly: worth doing, too early, or not our fit.
